Julie Kowald, UTS Rapido Social Impact

Australian

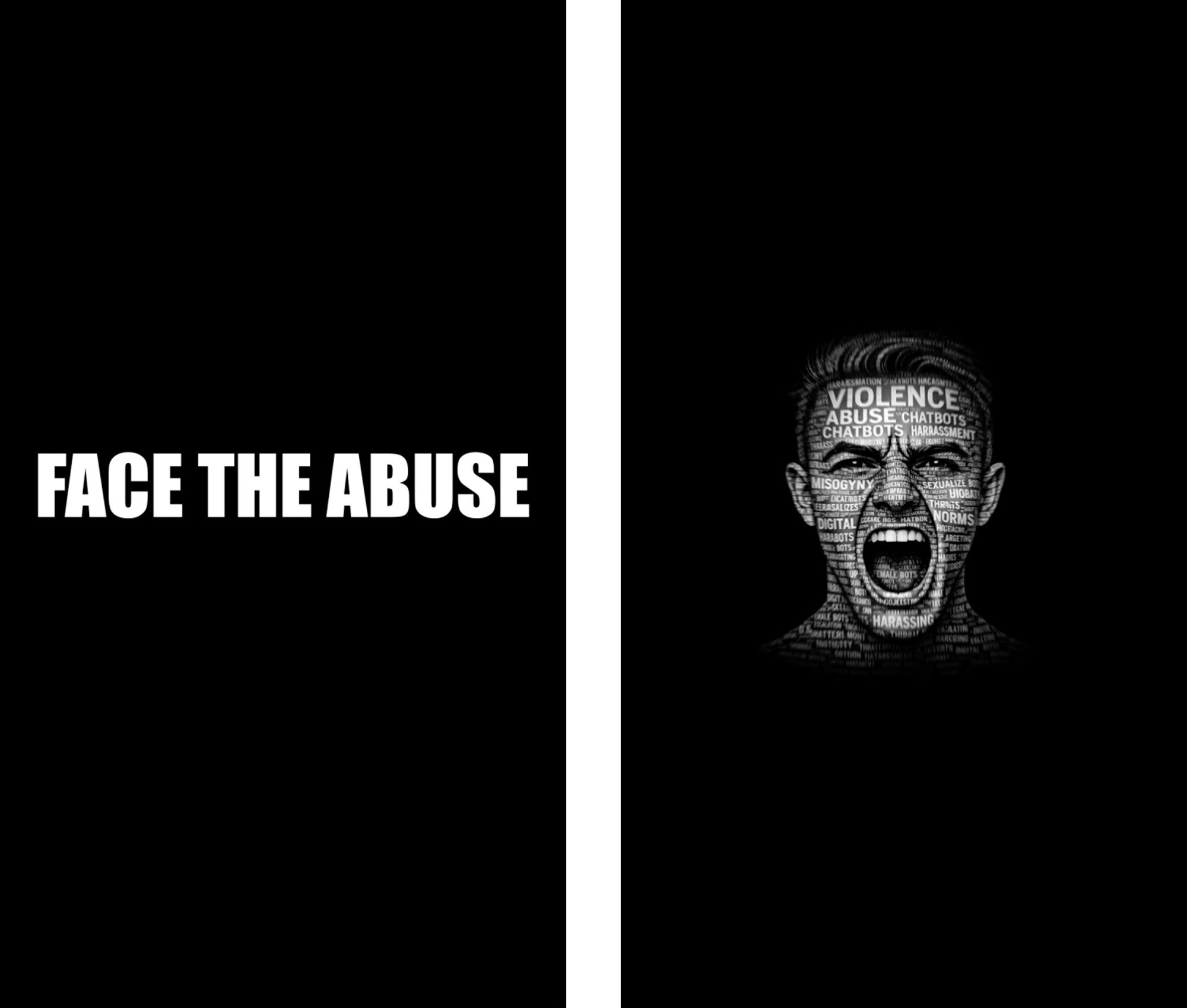

Face the Abuse (2026)

Digital Video

Content warning for the video below

Face the Abuse talks about gender-based violence and uses abusive language that has been directed at AI technologies (for example, Siri and Alexa). The purpose is to illustrate how abusive language in this context may normalise violence against women in society.

This experience is provided visually and via audio. It uses language that is offensive and may be shocking to hear or to see.

For some, this may be re-traumatising or upsetting. Your response may be particular to your lived experience. In some cases you may be surprised by your own response, so take your time and be kind to yourself.

Options for viewers to manage their experience

If you find that the content is re-traumatising or upsetting, you have the option to stop watching this recorded content at any time.

Alternatively, if you choose to continue with this audio-visual experience, you can remove your headphones or silence the sound.

Chapter summary

Artificial Intelligence: Algorithmic Accountability Through an Intersectional Gender Lens

Artificial intelligence has long been seen as a man’s world, dominated by figures like Zuckerberg, Musk and Bezos. Yet women such as Ada Lovelace, who wrote the first computer program in the 1800s, and Mary Allen Wilkes, who programmed the LINC in the 1960s, reflect a different history. Today, the problem extends beyond the lack of women in tech: technology is not neutral. Hiring tools favour men, deepfakes target women and feminised voice assistants like Alexa, Siri and Cortana reinforce stereotypes that women exist to serve. These systems shape how we think about gender and normalise inequality. Because AI systems learn from biased data, discriminatory outcomes persist. Technical fixes are insufficient without cultural change and strong legal frameworks demanding accountability. Feminist approaches to AI design and governance are essential to dismantle stereotypes and ensure technology promotes equality rather than replicates discrimination.

Open Access: (Read online or download free)

Artist statement

Face the Abuse is an immersive installation featuring a realistic AI chatbot that delivers a scripted account of the scale and impact of gender-based violence directed toward digital assistants. The work examines how patterns of harassment, sexualisation, and dehumanisation—long established in the physical world—are being rehearsed, normalised and amplified through everyday interactions with artificial intelligence.

The artwork is experienced inside a darkened, acoustically dampened booth designed to isolate the viewer from external distraction. This controlled environment creates an intimate, one-on-one encounter in which the digital assistant addresses each audience member directly. The absence of ambient noise and light heightens focus, collapsing the distance between speaker and listener and replicating the conditions under which abuse toward digital systems most often occurs: privately, casually and without witnesses.

As the AI delivers its message, the screen presents real instances of abusive messages drawn from documented interactions with widely used digital assistants, including Siri, Cortana, Alexa, ChatGPT and others. These messages are shown verbatim. Alongside them appear representational human faces: constructed images, not actual photographs of perpetrators. This ethical substitution preserves anonymity while making visible the human agency behind each interaction.

This confrontation is central to the work. Abuse directed at digital assistants is often dismissed as harmless, humorous or inconsequential. By attaching language to faces, Face the Abuse refuses that dismissal. The audience is required to acknowledge that these interactions are not abstract data points or technical anomalies, but intentional acts performed by people. The title reflects this demand: viewers must literally face the abuse, both in language and in form.

The work does not frame the AI as a victim, nor does it seek empathy for machines. Instead, it asks what these interactions reveal about human behaviour, power, and accountability. When digital assistants are gendered, compliant, and endlessly patient, they become sites where misogyny and aggression are exercised without consequence. Practised repeatedly, this behaviour risks becoming normalised—reshaping expectations of consent, response, and respect.

As the creator of Face the Abuse, I position digital harassment as part of a broader continuum of gender-based violence. I have worked in software engineering for over two decades, where I have witnessed gendered patterns ingrained in the technology sector. In creating this work, I have come to realise the consequences of feminising virtual assistants and the extent to which abuse is tolerated in digital spaces, whether in homes, classrooms, workplaces or banking systems. There is also a risk that this abuse does not remain contained. It conditions behaviour, reinforces entitlement and blurs the boundary between virtual and real-world harm.

The work ultimately calls for recognition rather than resolution. By slowing the encounter, isolating the viewer and removing the possibility of distance or irony, Face the Abuse asks each participant to confront their own relationship to these systems and to the behaviours they enable. The message is direct: this is not someone else’s problem. This is behaviour we must all be willing to face.

Bibliography: Download here

Prof. Vijeyarasa's reflections

Julie and I wanted to focus attention on the documented harassment perpetrated against virtual technologies. We were at times frustrated and at others alarmed by the abusive language captured in the research on sexual abuse against bots and virtual assistants, as well as by the scope of the problem. As with all of my work with Rapido Social Impact, our collaboration showed what can be achieved when disciplines come together, while also reflecting our own experiences as women and parents in a world where AI has become part of everyday life. The evidence base for the risk that harassment of feminised virtual assistants will normalise harassment in real life is still weak. Recognising this gap, the work seeks to encourage viewers to think about the racialised and gendered nature of harassment and about our own roles as users of these technologies.